Hello again. It’s good to be back with Blog 2.0 and our new focus on CISO Decision Intelligence.

The question we hear most from security leaders isn’t technical. It’s this: how do I stay ahead of a threat environment moving faster than my team can process? This post addresses that question.

We introduce the CyberSec Centaur, a human-AI teaming model, and explain how it supports both enterprise and employee resilience. We begin with the drivers behind the shift toward cyber resilience as a security operating model. With that foundation, we explain Centaur teaming concepts for cybersecurity, enterprise risk management, and employee skills development. We then demonstrate a Centaur use-case for CISO Decision Intelligence using our VectorBlack system. We close with our outlook on how human-AI teaming in enterprise cybersecurity will evolve over the next two years.

Cyber Resilience

The World Economic Forum defines cyber resilience as “an organization’s ability to minimize the impact of significant cyber incidents on its primary goals and objectives.” [1] With AI and digital transformation now universal, cyber resilience has become a strategic leadership issue, not just a security issue. [2,3]

In large organizations, the CISO is the senior executive responsible for implementing the resilience strategy. Embracing cyber resilience means moving away from traditional prevention models toward an operating model designed to absorb and recover from disruptive attacks. [4] Technical controls alone cannot get an organization there. Resilience requires cultural leadership and organizational change – spanning departments and levels, from the C-suite to the CISO’s team, and extending to partners, vendors, and supply chains. [5]

The threat is immediate. Anthropic’s Mythos and Glasswing initiative has been major news since April 7th. [6] Google’s Threat Intelligence Group reported on May 11th that nation-state actors are moving from nascent AI-enabled operations to industrial-scale use of generative models and agentic workflows, and are now experimenting with agentic attacks against AI development tooling, including OpenAI’s OpenClaw. [7]

Cyber Centaurs

The threat is recognized at the highest levels of industry and government. Palo Alto Networks CEO Nikesh Arora states plainly that adversaries hold an asymmetric advantage, and defenders must fight AI with AI. [8] Lt. Gen. Michele Bredenkamp of NGA describes a different response: pair every employee with AI tools and training, apply AI where it has a genuine edge – coding, research, speed – and keep humans in the loop for the critical thinking and judgment no model reliably replicates. [9]

Human-AI pairing has a name borrowed from Greek mythology: the centaur, a half-human, half-horse hybrid symbolizing a symbiotic union of distinct capabilities. Cybersecurity researchers and practitioners have adopted the term for human-AI collaboration in knowledge work. The centaur is not a human using a tool. It is a working relationship with an explicit division of labor. [10, 11]

The Human Half Still Matters: AI models have improved dramatically, but carry known failure modes that matter in high-stakes environments. Chatbots produce factually incorrect output and will defend wrong answers without pushback. Agents drift, deviating from intended tasks incrementally and invisibly until the compounding effect surfaces in a consequential decision. Machine learning models inherit bias from training data and degrade silently over time.

Resilience is a human skill as well as an organizational property. A CISO who builds a team conditioned to accept AI outputs uncritically (what some researchers call cognitive surrender), without the baseline judgment to recognize when those outputs are wrong, is building institutional fragility. Forbes reports that AI use without discipline erodes independent judgment. [13] That erosion is hardest to detect in junior team members, who may never develop analytical instincts if AI does that work for them.

Good Centaur Practice: The best AI users treat AI as a thinking tool, not a production tool. They use it to test assumptions, evaluate alternatives, and challenge their own reasoning. Top performers ask better questions and apply more skepticism to the answers. [14]

For CISOs, translating this into team practice requires a plan that might stipulate:

- developing function-specific guidance and training (e.g. for SOC incident response, threat hunting, and threat intelligence teams), rather than a single enterprise policy;

- using AI itself to build continuous, scenario-based training that replaces or complements annual compliance exercise models;

- developing playbooks and mapping workflows to identify where AI genuinely adds speed without displacing the human judgment that makes outputs trustworthy;

- making verification a professional standard, not a suggestion, so that the Centaur relationship remains one of human direction and AI execution.

Resilience, in this framing, is something a CISO can model, teach, and measure.

CISO Decision Intelligence

As CISOs carry accountability for their organization’s cyber resilience strategy and execution, we built VectorBlack to address the Decision Intelligence needs specific to CISOs in large organizations. VectorBlack is an AI-native application that combines machine learning, generative AI, and agents to support CISO decision-making. It represents the AI half of the Centaur in our use-case.

VectorBlack is designed for security leaders who face a common challenge: information overload. With thousands of security articles published daily, dozens of CVEs announced each week, and constant pressure from boards and executives, CISOs need a system that filters noise to surface what matters, translates technical threats into business risk language, predicts which threats will reach executive attention, and provides data-backed justification for security spend.

VectorBlack helps CISOs anticipate and respond to information requests from senior executives concerning risk, threat identification, and sector targeting. For Executive Risk Questions (ERQs), it positions the CISO to demonstrate three things:

- Awareness: knowing what’s happening;

- Impact: understanding what it means; and

- Action: knowing what to do.

VectorBlack’s News Intelligence service continuously collects, analyzes, clusters, tags, indexes, and scores reporting from 23,000 sources. The CEO Predictor feature uses AI to analyze media coverage, trending velocity, and sector relevance to predict which security threats the CEO is likely to raise in the next seven days. It then uses generative AI to produce CEO-ready prep cards in Q&A format and to generate PDFs for board meetings or quick reference.
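
To make the idea concrete, here is a minimal sketch of how three signals like these could be combined into a single probability. It is not VectorBlack's implementation: the `EventSignals` fields, the coefficients in `SIGNAL_WEIGHTS`, the `BIAS` value, and the choice of a logistic function are all assumptions made for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class EventSignals:
    """Hypothetical per-event inputs, each normalized to the range 0..1."""
    media_coverage: float     # share of tracked outlets covering the event
    trending_velocity: float  # growth in article count over a recent window
    sector_relevance: float   # overlap between the event and the organization's sector


# Illustrative coefficients only; a real model would learn these from history.
SIGNAL_WEIGHTS = {"media_coverage": 2.0, "trending_velocity": 1.5, "sector_relevance": 2.5}
BIAS = -3.0


def ceo_question_probability(signals: EventSignals) -> float:
    """Combine the three signals with a logistic function into a 0..1 probability."""
    z = BIAS + sum(w * getattr(signals, name) for name, w in SIGNAL_WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def rank_events(events: dict[str, EventSignals]) -> list[tuple[str, float]]:
    """Return (event name, probability) pairs, most likely first."""
    scored = [(name, ceo_question_probability(s)) for name, s in events.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)
```

Ranking events by a probability like this is one way a system could decide which prep cards to generate first.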

The following use-case illustrates how this works. On May 12, 2026 at 5:52 PM, Wired was among the first to report a significant ransomware attack on Foxconn, with supply chain exposure for Apple, Nvidia, Google, and Dell. [15]

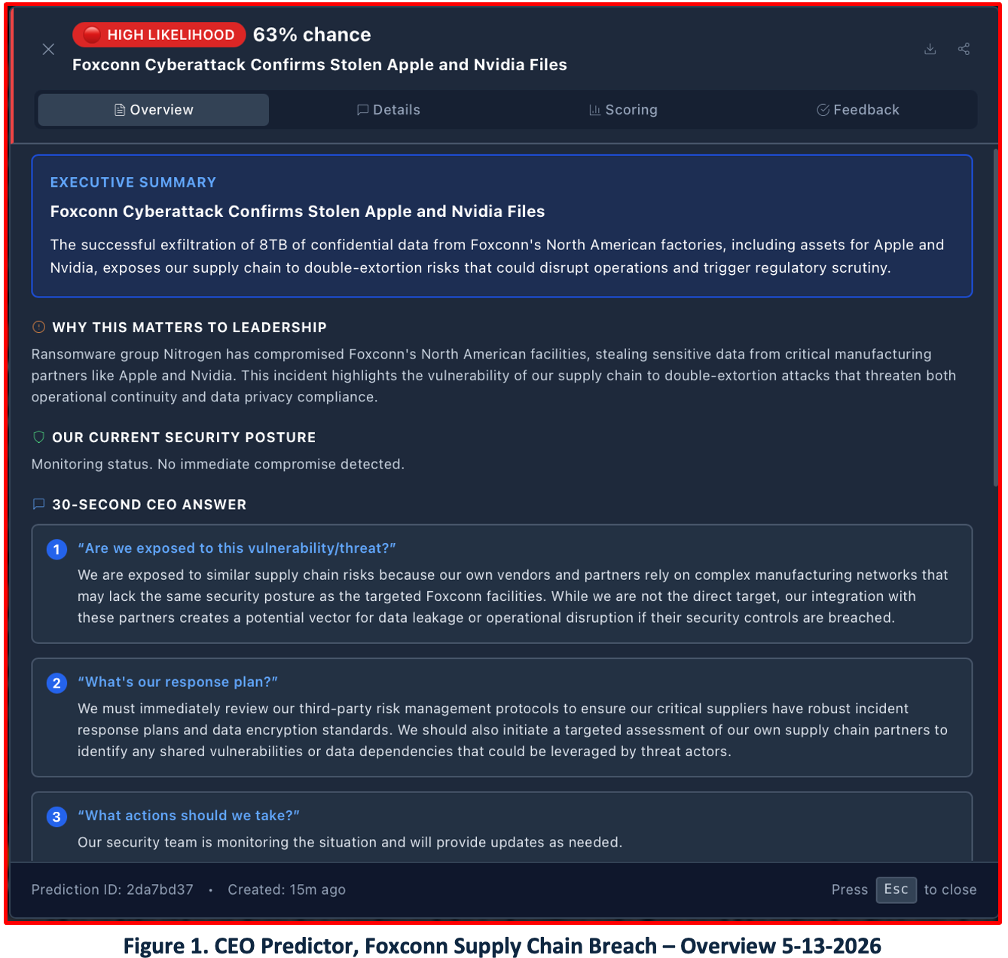

Figure 1 shows a CEO Predictor prep card generated on May 13, 2026 at approximately 2:30 PM. The card shows a 63% probability prediction that the CEO will ask about this event – rated High Likelihood – along with an AI-generated summary demonstrating CISO awareness, organizational impact assessment, and an action plan. A PDF export is available for board distribution.

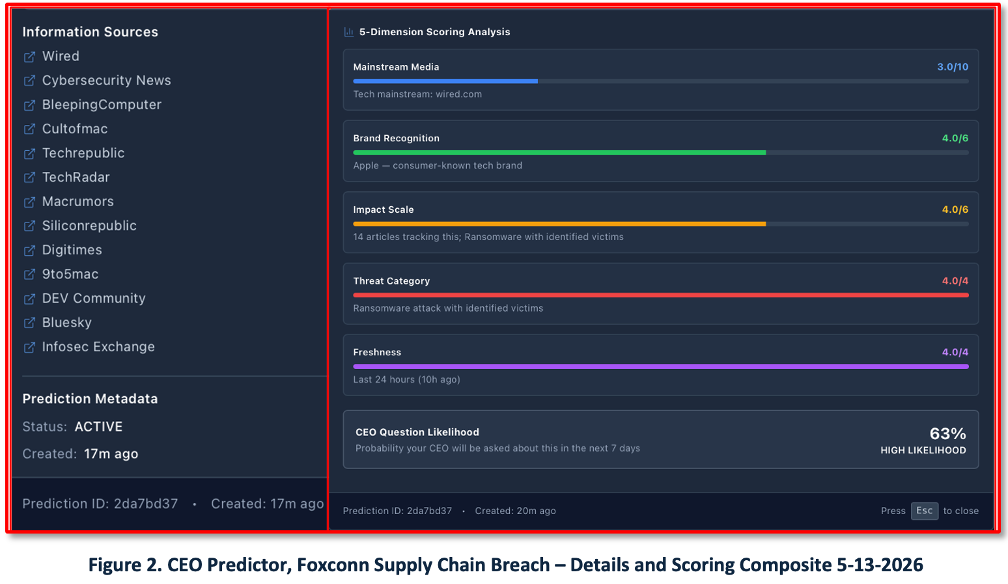

Figure 2 shows the Details and Scoring composite. Fourteen sources reported the event within 24 hours. The five-dimension scoring model evaluates mainstream media coverage, brand recognition, impact scale, threat category, and freshness – producing a transparent, auditable score that explains why an event is flagged as executive-relevant.
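
A transparent, auditable score of this kind can be sketched as a weighted sum that keeps its per-dimension breakdown. The five dimension names below come from the description above; the weights, the 0 to 100 rating scale, and the names `DIMENSION_WEIGHTS`, `ScoreBreakdown`, and `score_event` are hypothetical and do not describe VectorBlack's actual model.

```python
from dataclasses import dataclass

# Dimension names follow the text; the weights are assumed for illustration.
DIMENSION_WEIGHTS = {
    "mainstream_media_coverage": 0.30,
    "brand_recognition": 0.20,
    "impact_scale": 0.25,
    "threat_category": 0.15,
    "freshness": 0.10,
}


@dataclass
class ScoreBreakdown:
    total: float                     # weighted sum on a 0..100 scale
    contributions: dict[str, float]  # points contributed by each dimension


def score_event(ratings: dict[str, float]) -> ScoreBreakdown:
    """Weight each 0..100 dimension rating and keep the per-dimension breakdown."""
    missing = set(DIMENSION_WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    contributions = {
        name: weight * ratings[name] for name, weight in DIMENSION_WEIGHTS.items()
    }
    return ScoreBreakdown(total=sum(contributions.values()), contributions=contributions)
```

Returning the contributions alongside the total is what makes the result auditable: a reviewer can see which dimension drove the flag rather than trusting a single opaque number.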

Outlook

Projecting two years in an environment this dynamic carries real uncertainty. While these observations are grounded in experience and current trajectory, they are not predictions.

AI is fundamentally changing cybersecurity. Known limitations, hype, and user resistance notwithstanding, continuous progress in AI capability and adversarial adoption compels security teams to keep pace – and to build systems and teams designed to be resilient.

CyberSec Centaurs, agentic systems, and recursive self-improvement will continue to advance. [16, 17] The division of labor between human judgment and machine execution will sharpen as models improve and organizations gain operational experience.

Information overload will intensify, driven significantly by AI-generated content. By mid-2025, an estimated 35% of newly published websites were already classified as AI-generated or AI-assisted. [18, 19] CISOs who invest in decision intelligence infrastructure now will have a structural advantage as the signal-to-noise ratio continues to deteriorate.

In response to documented workforce risks – burnout, cognitive surrender, cognitive offloading, and AI-washing – and drawing on the positive examples set by IKEA, EY, and IBM in workforce reskilling, CISOs and CEOs will invest more deliberately in employee resilience. [20–23] The Centaur operating model provides the framework for doing so.

Advisory and consulting demand for AI-cybersecurity services will see strong growth as organizations invest in training, resilience programs, and staff augmentation, particularly for GRC functions where human judgment and regulatory accountability cannot be delegated to a model.

NOTE:

- This White Paper was conceived, researched, and written by ThreatShare human analysts with AI research and editorial support.

- Blog image source1: Zscaler – The CISO’s Gambit podcast on Apple

- Blog image source2: Silicon Valley Software Group Centaur

References

1. World Economic Forum – Unpacking Cyber Resilience, White Paper, November 2024

2. EY – Embracing cyber resilience: the shift from defense to endurance, 13-Sept-2024

3. World Economic Forum – Why taking a strategic approach to security is key for cyber resilience, 13-March-2025

4. Breaking Defense – Firewalls won’t protect GeoINT Companies. Cyber resilience will, if we act now, 4-May-2026

5. Viking Cloud – The Human Element in Risk-Based Security: Building a Culture of Cyber Resilience, 25-March-2025

6. red.anthropic.com – Assessing Claude Mythos Preview’s cybersecurity capabilities, 7-April-2026

7. Google Threat Intelligence Group – GTIG AI Threat Tracker: Adversaries Leverage AI for Vulnerability Exploitation, Augmented Operations, and Initial Access, 11-May-2026

8. Palo Alto Networks – Weaponized Intelligence, by Nikesh Arora, 30-March-2026

9. Breaking Defense – AI ‘blueprint’ coming soon to NGA to help ‘operationalize’ GEOINT, 7-May-2026

10. Harvard Data Science Review (HDSR) – Effective Generative AI: The Human-Algorithm Centaur, 19-Dec-2024

11. Kevin Roose and Casey Newton, Hard Fork podcast, The New York Times – ‘A.I.-Washing’ Layoffs? + Why L.L.M.s Can’t Write Well + Tokenmaxxing, 20-March-2026

12. Barron’s (via Apple Reader) – Why Palantir Can’t Stop Talking About AI Slop, 6-May-2026

13. Forbes – 5 Ways To Prevent AI Use From Quietly Eroding Your Judgment, 1-May-202

14. Harvard Business Review – What the Best AI Users Do Differently—and How to Level Up All of Your Employees, 19-March-2026

15. Wired – Foxconn Ransomware Attack Shows Nothing Is Safe Forever, 12-May-2026

16. arXiv:2512.22883v1 [cs.CR] – Agentic AI for Cyber Resilience: A New Security Paradigm and Its System-Theoretic Foundations, 28-Dec-2025

17. IEEE Spectrum – Can AI Really Build Better AI?, 7-May-2026

18. Jonas Dolezal, Sawood Alam, Mark Graham, and Maty Bohacek – The Impact of AI-Generated Text on the Internet, 2026

19. The Atlantic – Did a Human Write This?, 1-May-2026

20. Inc. – AI Is Boosting Productivity—but Data Shows Employee Workloads Are Getting Heavier, 13-March-2026

21. EY – US AI Pulse Survey, December 2025

22. Forbes – Leaders Automating Their Workforce Have Already Seen How This Ends, 30-April-2026

23. Raise Summit – Workforce Transformation: Reskilling Strategies that Actually Work, 3-Feb-2026